Hadoop 3.x (MapReduce): MapReduce Framework Principles, Part 3

- 1. OutputFormat interface implementations

- 2. Custom OutputFormat: hands-on example

  - 1. Requirements

  - 2. Requirements analysis

  - 3. Implementation

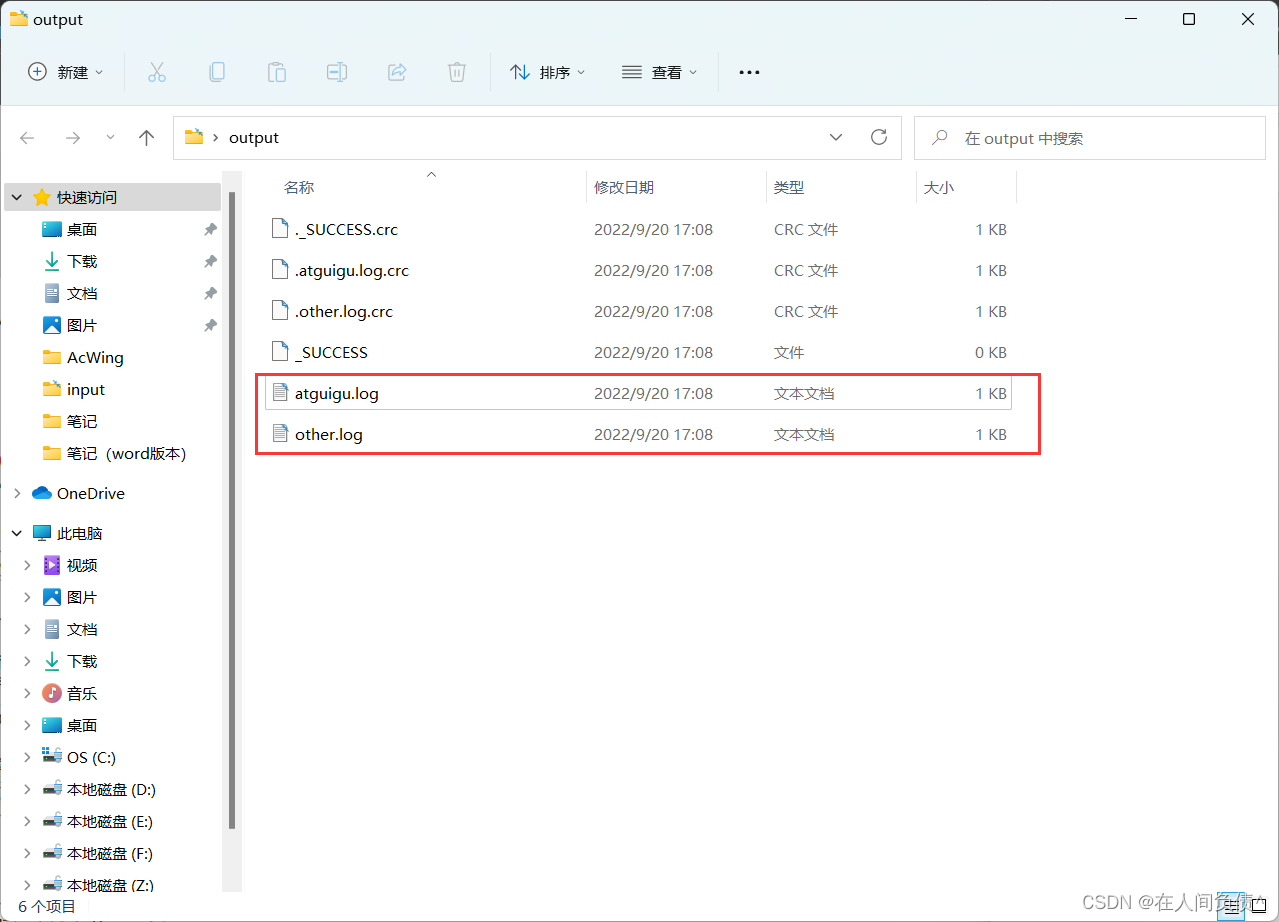

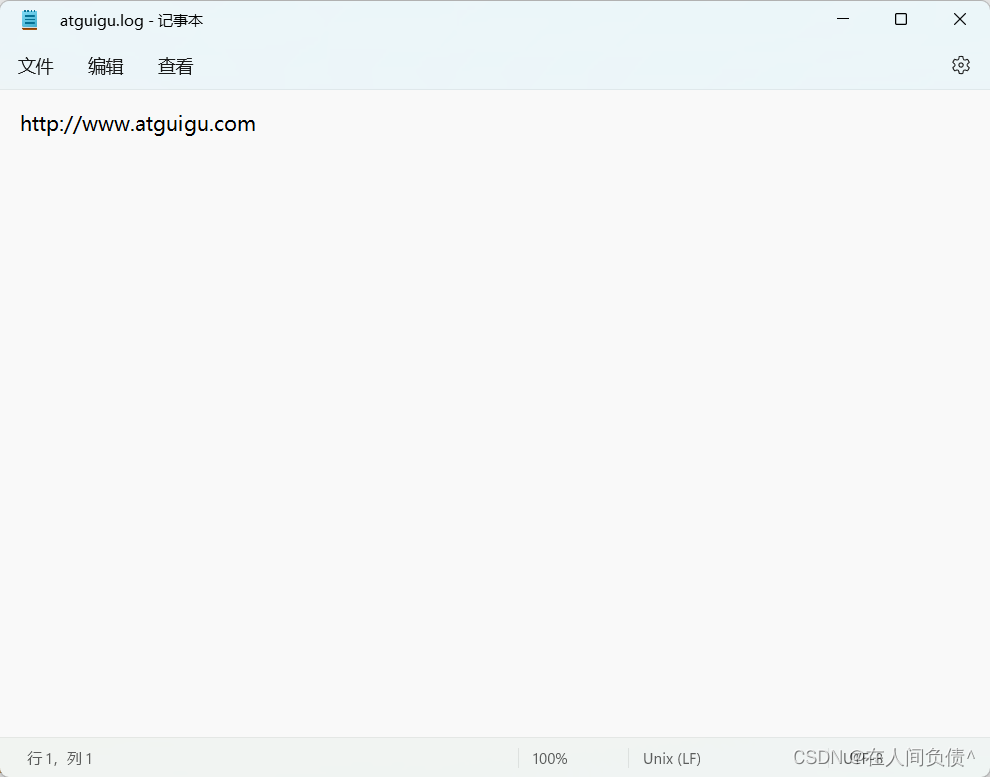

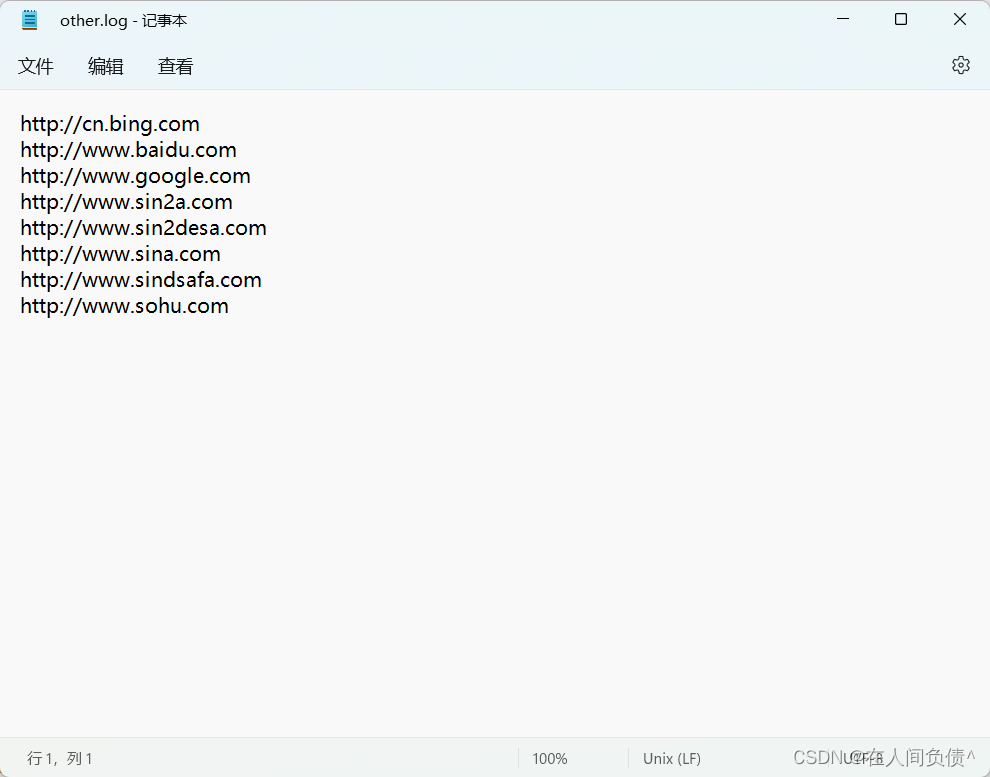

  - 4. Test output

1. OutputFormat interface implementations

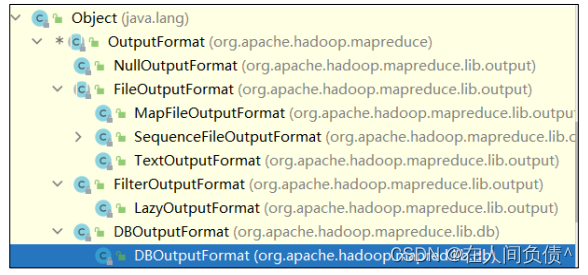

OutputFormat is the base type for MapReduce output: every class that writes MapReduce output implements it. Below are a few common OutputFormat implementations.

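1. OutputFormat implementations

2. Default output format: TextOutputFormat

3. Custom OutputFormat

- Use case: writing output to storage systems such as MySQL, HBase, or Elasticsearch.

- Steps to define a custom OutputFormat

  - Define a class that extends FileOutputFormat.

  - Implement a RecordWriter, overriding write(), the method that actually emits the output data.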
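For contrast with the custom route, it helps to see what the default TextOutputFormat listed above does with each record. As far as I understand it, every record becomes one line: the key, a separator (a tab unless reconfigured), the value, and a newline, with the separator skipped when either side is missing. The sketch below mimics that layout in plain Java. It is not Hadoop code: the class name `TextLineLayoutSketch` is made up, and `null` stands in for a missing key or value (Hadoop treats `NullWritable` the same way).

```java
/**
 * Plain-Java sketch of the line layout used by the default text output:
 * key, separator, value, newline. Illustrative only, not Hadoop code.
 */
final class TextLineLayoutSketch {

    /** Assumed default separator (a tab). */
    static final String SEPARATOR = "\t";

    /** Returns the line that would be written, or "" when both parts are absent. */
    static String formatRecord(Object key, Object value) {
        boolean noKey = (key == null);
        boolean noValue = (value == null);
        if (noKey && noValue) {
            return ""; // nothing is written for an empty record
        }
        StringBuilder line = new StringBuilder();
        if (!noKey) {
            line.append(key);
        }
        if (!noKey && !noValue) {
            line.append(SEPARATOR); // separator only when both parts exist
        }
        if (!noValue) {
            line.append(value);
        }
        return line.append('\n').toString();
    }

    public static void main(String[] args) {
        // A key with no value: the line is just the key.
        System.out.print(formatRecord("http://www.example.com", null));
        // A key and a value: separated by a tab.
        System.out.print(formatRecord("hello", 3));
    }
}
```

This is also why emitting `<Text, NullWritable>` pairs produces output lines that contain only the original text.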

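2. Custom OutputFormat: hands-on example

1. Requirements

Filter the input log: websites whose line contains atguigu are written to e:/atguigu.log, and websites that do not contain atguigu are written to e:/other.log.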

- Input data

- Expected output data
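The requirement boils down to one routing decision per log line. Here is a minimal plain-Java sketch of that decision, with no Hadoop classes involved. The class name `LogRoutingSketch` and the sample URLs are made up for illustration, since the real input data is not reproduced in the text.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Illustrative only: splits lines into "contains atguigu" and "everything else". */
final class LogRoutingSketch {

    static final String KEYWORD = "atguigu";

    /** Returns two lists: index 0 holds lines containing the keyword, index 1 the rest. */
    static List<List<String>> route(List<String> lines) {
        List<String> matched = new ArrayList<>();
        List<String> others = new ArrayList<>();
        for (String line : lines) {
            if (line.contains(KEYWORD)) {
                matched.add(line); // would go to atguigu.log
            } else {
                others.add(line);  // would go to other.log
            }
        }
        return Arrays.asList(matched, others);
    }

    public static void main(String[] args) {
        // Hypothetical sample lines.
        List<String> sample = Arrays.asList(
                "http://www.atguigu.com",
                "http://www.example.com",
                "http://cn.example.org");
        List<List<String>> routed = route(sample);
        System.out.println("atguigu.log: " + routed.get(0));
        System.out.println("other.log:   " + routed.get(1));
    }
}
```

In the MapReduce job this same check lives in the RecordWriter's write() method, so the split happens at output time rather than in the Mapper or Reducer.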

2. Requirements analysis

3. Implementation

- Write the LogMapper class

```java
package com.fickler.mapreduce.outputformat;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**
 * @author dell
 * @version 1.0
 */
public class LogMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    @Override
    protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, NullWritable>.Context context) throws IOException, InterruptedException {
        // Pass each input line through unchanged: the line itself becomes the output key.
        context.write(value, NullWritable.get());
    }
}
```

- Write the LogReducer class

```java
package com.fickler.mapreduce.outputformat;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**
 * @author dell
 * @version 1.0
 */
public class LogReducer extends Reducer<Text, NullWritable, Text, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<NullWritable> values, Reducer<Text, NullWritable, Text, NullWritable>.Context context) throws IOException, InterruptedException {
        // Write the key once per value so that duplicate log lines are not lost.
        for (NullWritable value : values) {
            context.write(key, NullWritable.get());
        }
    }
}
```
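Why loop over `values` instead of writing the key once? Identical lines turn into the same key during the shuffle, so a single reduce() call may stand for several input lines. The plain-Java sketch below simulates this with a `TreeMap` and compares the two ways of emitting. The class and method names are hypothetical, and the real shuffle is distributed and compares raw bytes, so take this only as an illustration of the grouping effect.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Illustrative only: shows how grouping by key affects duplicate lines. */
final class ReduceDuplicatesSketch {

    /** Groups identical lines and counts them, roughly as the shuffle groups identical keys. */
    static Map<String, Integer> group(List<String> mapOutput) {
        Map<String, Integer> grouped = new TreeMap<>();
        for (String line : mapOutput) {
            grouped.merge(line, 1, Integer::sum);
        }
        return grouped;
    }

    /** Emits each key once per grouped value, like a loop over the values. */
    static List<String> emitPerValue(Map<String, Integer> grouped) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Integer> entry : grouped.entrySet()) {
            for (int i = 0; i < entry.getValue(); i++) {
                out.add(entry.getKey());
            }
        }
        return out;
    }

    /** Emits each key exactly once, which silently drops duplicates. */
    static List<String> emitPerKey(Map<String, Integer> grouped) {
        return new ArrayList<>(grouped.keySet());
    }

    public static void main(String[] args) {
        // Hypothetical map output with one duplicated line.
        Map<String, Integer> grouped = group(Arrays.asList("b.com", "a.com", "b.com"));
        System.out.println("per value: " + emitPerValue(grouped));
        System.out.println("per key:   " + emitPerKey(grouped));
    }
}
```

If deduplicated output is what you actually want, writing the key once per reduce() call is the simpler choice.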

- Define a custom LogOutputFormat class

```java
package com.fickler.mapreduce.outputformat;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.RecordWriter;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**
 * @author dell
 * @version 1.0
 */
public class LogOutputFormat extends FileOutputFormat<Text, NullWritable> {

    @Override
    public RecordWriter<Text, NullWritable> getRecordWriter(TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {
        // Hand the task context to the custom writer so it can read the job configuration.
        LogRecordWrite lrw = new LogRecordWrite(taskAttemptContext);
        return lrw;
    }
}
```

- Write the LogRecordWrite class (the custom RecordWriter)

```java
package com.fickler.mapreduce.outputformat;

import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.RecordWriter;
import org.apache.hadoop.mapreduce.TaskAttemptContext;

import java.io.IOException;
import java.nio.charset.StandardCharsets;

/**
 * @author dell
 * @version 1.0
 */
public class LogRecordWrite extends RecordWriter<Text, NullWritable> {

    private FSDataOutputStream atguiguOut;
    private FSDataOutputStream otherOut;

    public LogRecordWrite(TaskAttemptContext job) {
        try {
            // Open one output stream per target file (paths are hardcoded for a local run).
            FileSystem fileSystem = FileSystem.get(job.getConfiguration());
            atguiguOut = fileSystem.create(new Path("C:\\Users\\dell\\Desktop\\output\\atguigu.log"));
            otherOut = fileSystem.create(new Path("C:\\Users\\dell\\Desktop\\output\\other.log"));
        } catch (IOException e) {
            throw new RuntimeException(e);
        }
    }

    @Override
    public void write(Text text, NullWritable nullWritable) throws IOException, InterruptedException {
        String log = text.toString();
        // Encode as UTF-8 explicitly: writeBytes() keeps only the low byte of each char
        // and would corrupt non-ASCII log lines.
        byte[] line = (log + "\n").getBytes(StandardCharsets.UTF_8);
        // Route the line by whether it contains "atguigu".
        if (log.contains("atguigu")) {
            atguiguOut.write(line);
        } else {
            otherOut.write(line);
        }
    }

    @Override
    public void close(TaskAttemptContext taskAttemptContext) throws IOException, InterruptedException {
        // Close both streams when the task finishes.
        IOUtils.closeStream(atguiguOut);
        IOUtils.closeStream(otherOut);
    }
}
```
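One detail to watch when writing text through an `FSDataOutputStream`: it extends `java.io.DataOutputStream`, whose `writeBytes(String)` writes only the low-order byte of each char. That is harmless for pure ASCII lines but mangles anything else. The sketch below compares it with writing explicitly encoded UTF-8 bytes, using only JDK classes. The class and method names are hypothetical, and a `ByteArrayOutputStream` stands in for the HDFS stream.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

/** Illustrative only: compares writeBytes(String) with writing UTF-8 encoded bytes. */
final class WriteBytesSketch {

    /** Writes the line with DataOutputStream.writeBytes and returns the raw bytes. */
    static byte[] viaWriteBytes(String line) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeBytes(line); // one byte per char, high byte discarded
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    /** Writes the line as explicitly encoded UTF-8 and returns the raw bytes. */
    static byte[] viaUtf8(String line) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.write(line.getBytes(StandardCharsets.UTF_8));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    public static void main(String[] args) {
        String line = "\u4e2d\u6587 log"; // two Chinese characters followed by " log"
        System.out.println("writeBytes length: " + viaWriteBytes(line).length);
        System.out.println("UTF-8 length:      " + viaUtf8(line).length);
        System.out.println("UTF-8 round trip:  "
                + new String(viaUtf8(line), StandardCharsets.UTF_8).equals(line));
    }
}
```

For ASCII-only logs both approaches produce identical bytes, so the difference only shows up once the log contains characters outside that range.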

- Write the LogDriver class

```java
package com.fickler.mapreduce.outputformat;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**
 * @author dell
 * @version 1.0
 */
public class LogDriver {

    public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);

        job.setJarByClass(LogDriver.class);
        job.setMapperClass(LogMapper.class);
        job.setReducerClass(LogReducer.class);

        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(NullWritable.class);

        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // Register the custom output format.
        job.setOutputFormatClass(LogOutputFormat.class);

        FileInputFormat.setInputPaths(job, new Path("C:\\Users\\dell\\Desktop\\input"));
        // An output directory is still required: LogOutputFormat extends FileOutputFormat,
        // which writes the _SUCCESS marker file there.
        FileOutputFormat.setOutputPath(job, new Path("C:\\Users\\dell\\Desktop\\output"));

        boolean b = job.waitForCompletion(true);
        System.exit(b ? 0 : 1);
    }
}
```

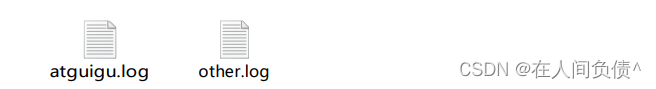

4. Test output