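Contents:

- Chapter 2 MapReduce Serialization Case Study

- 2.1 Implementing the serialization interface (`Writable`) in a custom FlowBean object

- 2.2 Serialization case study in practice

- 2.3.1 Requirements

- 2.3.2 Requirements analysis

- 2.3.3 Writing the MapReduce program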

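Chapter 2 MapReduce Serialization Case Study

2.1 Implementing the serialization interface (Writable) in a custom FlowBean object

In enterprise development, the commonly used basic serialization types often cannot cover every requirement. For example, to pass a Bean object around inside the Hadoop framework, that object must implement the serialization interface.

Serializing a Bean object takes the following 7 steps.

- (1) The class must implement the Writable interface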

public class FlowBean implements Writable {

...

}

- (2) Deserialization calls the no-argument constructor through reflection, so the class must have a no-argument constructor

public FlowBean() {

super();

}

- (3) Override the serialization method

@Override

public void write(DataOutput out) throws IOException {

out.writeLong(upFlow);

out.writeLong(downFlow);

out.writeLong(sumFlow);

}

- (4) Override the deserialization method

@Override

public void readFields(DataInput in) throws IOException {

upFlow = in.readLong();

downFlow = in.readLong();

sumFlow = in.readLong();

}

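- (5) The order of deserialization must be exactly the same as the order of serialization

- (6) To display the result in the output file, override toString(); separating the fields with "\t" makes them easier to use later.

- (7) If the custom Bean needs to be transmitted as a key, it must also implement the Comparable interface (in Hadoop this is usually done through WritableComparable, which combines Writable and Comparable), because the Shuffle phase of the MapReduce framework requires keys to be sortable. See the sorting case later for details.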

@Override

public int compareTo(FlowBean o) {

// Descending order, largest first; equal totals compare as 0

return Long.compare(o.getSumFlow(), this.sumFlow);

}
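The descending comparison in step (7) can be tried out without Hadoop on the classpath. The following is only a minimal sketch: `SumFlowRecord` and `DescendingSortDemo` are hypothetical names that do not appear in the original notes, and `SumFlowRecord` keeps just the field used for ordering. In a real job the key class would also have to implement the Writable methods.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

class DescendingSortDemo {
    // Sorts the given totals through SumFlowRecord.compareTo and returns them in sorted order.
    static List<Long> sortDescending(long... totals) {
        List<SumFlowRecord> records = new ArrayList<>();
        for (long t : totals) {
            records.add(new SumFlowRecord(t));
        }
        Collections.sort(records);
        List<Long> sorted = new ArrayList<>();
        for (SumFlowRecord r : records) {
            sorted.add(r.sumFlow);
        }
        return sorted;
    }

    public static void main(String[] args) {
        System.out.println(sortDescending(300L, 1200L, 300L, 50L)); // prints [1200, 300, 300, 50]
    }
}

// Hypothetical stand-in for FlowBean that keeps only the field used for ordering.
class SumFlowRecord implements Comparable<SumFlowRecord> {
    final long sumFlow;

    SumFlowRecord(long sumFlow) {
        this.sumFlow = sumFlow;
    }

    @Override
    public int compareTo(SumFlowRecord o) {
        // Swapping the arguments gives descending order; equal values yield 0.
        return Long.compare(o.sumFlow, this.sumFlow);
    }
}
```

Returning 0 for equal totals keeps the comparison consistent with the Comparable contract, which a version that only ever returns -1 or 1 does not.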

2.2 Serialization case study in practice

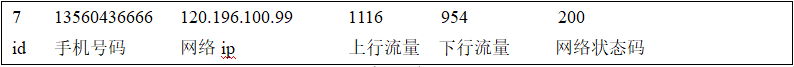

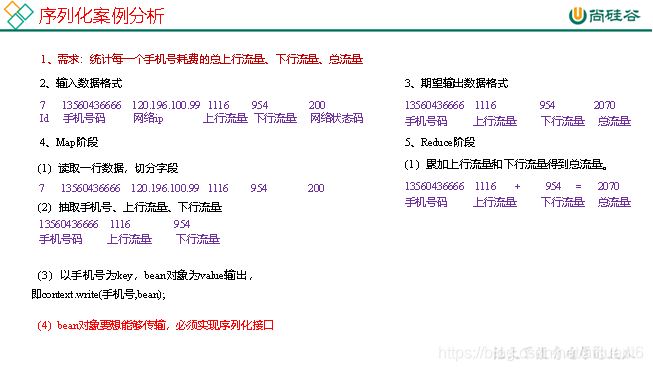

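2.3.1 Requirements

For each phone number, compute the total upstream traffic, the total downstream traffic, and the overall total traffic.

- (1) Input data

The input is read from the file phone_data.txt.

- (2) Input data format: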

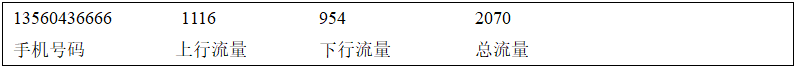

- (3) Expected output data format

2.3.2 Requirements analysis

2.3.3 Writing the MapReduce program

- (1) Write the Bean object for the traffic statistics

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class FlowBean implements Writable {

private long upFlow;

private long downFlow;

private long sumFlow;

public FlowBean() {

}

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

public void setFlow(long upFlow, long downFlow) {

this.upFlow = upFlow;

this.downFlow = downFlow;

this.sumFlow = upFlow + downFlow;

}

public long getUpFlow() {

return upFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

public long getDownFlow() {

return downFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public void setSumFlow(long sumFlow) {

this.sumFlow = sumFlow;

}

/*

* Serialization method

* @param dataOutput the data sink provided by the framework

* */

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

/*

* Deserialization method

* @param dataInput the data source provided by the framework

* */

@Override

public void readFields(DataInput dataInput) throws IOException {

upFlow = dataInput.readLong();

downFlow = dataInput.readLong();

sumFlow = dataInput.readLong();

}

}
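The write/readFields pair above can be exercised locally with plain java.io streams, since DataOutput and DataInput are standard JDK interfaces. The sketch below is an illustration only: `FlowRecord` and `FlowRoundTripDemo` are hypothetical names, and `FlowRecord` mirrors FlowBean's three fields without implementing Hadoop's Writable, so that the example compiles without Hadoop.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class FlowRoundTripDemo {
    // Serializes a record to bytes, reads it back into a fresh object,
    // and returns {upFlow, downFlow, sumFlow} of the copy.
    static long[] roundTrip(long upFlow, long downFlow) {
        try {
            FlowRecord original = new FlowRecord();
            original.setFlow(upFlow, downFlow);

            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            original.write(new DataOutputStream(buffer));

            // Created empty first, the way the framework uses the no-argument constructor.
            FlowRecord copy = new FlowRecord();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(buffer.toByteArray())));
            return new long[] {copy.upFlow, copy.downFlow, copy.sumFlow};
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        long[] fields = roundTrip(100L, 250L);
        System.out.println(fields[0] + "\t" + fields[1] + "\t" + fields[2]);
    }
}

// Hypothetical stand-in mirroring FlowBean's fields and its write/readFields methods.
class FlowRecord {
    long upFlow;
    long downFlow;
    long sumFlow;

    void setFlow(long upFlow, long downFlow) {
        this.upFlow = upFlow;
        this.downFlow = downFlow;
        this.sumFlow = upFlow + downFlow;
    }

    void write(DataOutput out) throws IOException {
        out.writeLong(upFlow);
        out.writeLong(downFlow);
        out.writeLong(sumFlow);
    }

    void readFields(DataInput in) throws IOException {
        // Same order as write(): upFlow, downFlow, sumFlow.
        upFlow = in.readLong();
        downFlow = in.readLong();
        sumFlow = in.readLong();
    }
}
```

If readFields read the fields in a different order than write wrote them, the copy would silently end up with swapped values, which is why step (5) insists on matching order.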

- (2) Write the Mapper class

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowMapper extends Mapper<LongWritable, Text, Text, FlowBean> {

private Text outK = new Text();

private FlowBean outV = new FlowBean();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

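// Split the tab-separated input line into fields

String[] fields = value.toString().split("\t");

// The phone number is the second field

String phone = fields[1];

// Upstream and downstream traffic are the third-to-last and second-to-last fields

long upFlow = Long.parseLong(fields[fields.length - 3]);

long downFlow = Long.parseLong(fields[fields.length - 2]);

// Emit the phone number as the key and the traffic bean as the value

outK.set(phone);

outV.setFlow(upFlow, downFlow);

context.write(outK, outV);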

}

}
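The field indexing used by the Mapper can be checked in isolation with plain Java. This is a rough sketch under assumptions: the two sample lines are hypothetical (the real layout of phone_data.txt is only shown in an image in the original notes), and `FlowLineParseDemo` is a made-up name. Counting the traffic columns from the end of the line presumably guards against records where a middle column is missing.

```java
import java.util.Arrays;

class FlowLineParseDemo {
    // Extracts {phone, upFlow, downFlow} with the same indexing as FlowMapper.
    static String[] parse(String line) {
        String[] fields = line.split("\t");
        return new String[] {
            fields[1],                  // phone number: second column
            fields[fields.length - 3],  // upstream traffic: third from the end
            fields[fields.length - 2]   // downstream traffic: second from the end
        };
    }

    public static void main(String[] args) {
        // Hypothetical records; the second one has no domain-name column.
        String withDomain = "1\t13700000001\t192.0.2.10\twww.example.com\t2481\t24681\t200";
        String withoutDomain = "2\t13700000002\t192.0.2.11\t264\t0\t200";
        System.out.println(Arrays.toString(parse(withDomain)));    // prints [13700000001, 2481, 24681]
        System.out.println(Arrays.toString(parse(withoutDomain))); // prints [13700000002, 264, 0]
    }
}
```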

- (3) Write the Reducer class

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<Text, FlowBean, Text, FlowBean> {

private FlowBean outV = new FlowBean();

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

// 1 Accumulate the upstream and downstream traffic for this phone number

long totalUp = 0;

long totalDown = 0;

for (FlowBean value : values) {

totalUp += value.getUpFlow();

totalDown += value.getDownFlow();

}

// 2 Wrap the result and write it out

outV.setFlow(totalUp, totalDown);

context.write(key, outV);

}

}
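The per-phone summation that the reduce stage has to perform for this requirement can be sketched in plain Java, without a cluster. Everything here is an assumption for illustration: `FlowReduceDemo` is a hypothetical name, the records are made up, and a TreeMap merely imitates the grouping and key ordering that the framework provides between map and reduce.

```java
import java.util.Map;
import java.util.TreeMap;

class FlowReduceDemo {
    // Groups {phone, upFlow, downFlow} records by phone and returns
    // phone -> {totalUp, totalDown, totalSum}, with keys in sorted order.
    static Map<String, long[]> totals(String[][] records) {
        Map<String, long[]> result = new TreeMap<>();
        for (String[] record : records) {
            long up = Long.parseLong(record[1]);
            long down = Long.parseLong(record[2]);
            long[] acc = result.computeIfAbsent(record[0], k -> new long[3]);
            acc[0] += up;
            acc[1] += down;
            acc[2] = acc[0] + acc[1];
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical map-stage output: two records share the same phone number.
        String[][] records = {
            {"13700000001", "100", "200"},
            {"13700000002", "5", "10"},
            {"13700000001", "1", "2"}
        };
        for (Map.Entry<String, long[]> e : totals(records).entrySet()) {
            long[] t = e.getValue();
            System.out.println(e.getKey() + "\t" + t[0] + "\t" + t[1] + "\t" + t[2]);
        }
    }
}
```

Each output line follows the same tab-separated layout that FlowBean's toString() produces, prefixed by the phone number.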

- (4) Write the Driver class

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver {

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {

// 1 Get the configuration and create the job

Job job = Job.getInstance(new Configuration());

// 2 Set the jar to load, located through the driver class

job.setJarByClass(FlowDriver.class);

// 3 Set the Mapper and Reducer classes

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

// 4 Set the key/value types of the map output

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(FlowBean.class);

// 5 Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

// 6 Set the input and output paths

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// 7 Submit the job

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : 1);

}

}

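Note: these are notes taken while studying. If they infringe any rights, please let me know and I will remove them.

Original video: https://www.bilibili.com/video/BV1Me411W7PV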