Based on https://www.cnblogs.com/caoshouling/p/14091113.html. I verified the steps myself, and it is a very good document.

1) Stop the HDFS cluster
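
A minimal sketch of this step, assuming HADOOP_HOME points at the Hadoop installation and that the standard sbin/stop-dfs.sh script is what stops the cluster. The function name stop_hdfs is hypothetical, and the jps check in the usage comment assumes a JDK is on PATH.

```shell
# Hypothetical helper: stop HDFS via the standard sbin/stop-dfs.sh script.
# Assumes HADOOP_HOME is set; returns non-zero if the script is missing.
stop_hdfs() {
    script="${HADOOP_HOME:-}/sbin/stop-dfs.sh"
    if [ ! -x "$script" ]; then
        echo "stop-dfs.sh not found under HADOOP_HOME=${HADOOP_HOME:-<unset>}" >&2
        return 1
    fi
    "$script"
}

# Usage (run on the node that normally starts the cluster):
#   stop_hdfs && jps    # NameNode/DataNode should no longer be listed
```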

2) Install and configure Maven

https://blog.csdn.net/hailunw/article/details/117996934

3) Build the LZO compression package

3.1) Install the prerequisite packages

yum -y install lzo-devel zlib-devel gcc autoconf automake libtool

3.2) Download the hadoop-lzo source from https://github.com/twitter/hadoop-lzo/archive/refs/heads/master.zip to server 99 and extract it.

wget https://github.com/twitter/hadoop-lzo/archive/refs/heads/master.zip

unzip master.zip
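
Before starting the build, it may help to confirm that Maven and the packages installed earlier are actually on PATH. The sketch below is one way to do that; the function name missing_tools is hypothetical, and the tool list in the usage comment is an assumption derived from the yum command above.

```shell
# Hypothetical helper: report any build tool that is not on PATH.
# Prints the missing names (one per line) and returns non-zero if any is missing.
missing_tools() {
    status=0
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "$tool"
            status=1
        fi
    done
    return "$status"
}

# Usage before starting the build:
#   missing_tools mvn gcc autoconf automake libtool || echo "install the tools listed above"
```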

3.3) Build and install LZO

cd /home/user/hadoop-lzo-master

mkdir lzo

export CFLAGS=-m64

export CXXFLAGS=-m64

export C_INCLUDE_PATH=/usr/local/hadoop/lzo/include

export LIBRARY_PATH=/usr/local/hadoop/lzo/lib

mvn clean package -Dmaven.test.skip=true

cd target/native/Linux-amd64-64

tar -cBf - -C lib . | tar -xBvf - -C ~

cp ~/libgplcompression* $HADOOP_HOME/lib/native/
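
A quick way to check that the copy above put the native library where Hadoop looks for it. This is only a sketch: count_gpl_libs is a hypothetical helper, and it simply counts files whose names start with libgplcompression in the given directory.

```shell
# Hypothetical helper: count files named libgplcompression* in a directory.
count_gpl_libs() {
    dir="$1"
    count=0
    for f in "$dir"/libgplcompression*; do
        # When nothing matches, the pattern stays literal and -e is false.
        if [ -e "$f" ]; then
            count=$((count + 1))
        fi
    done
    echo "$count"
}

# Usage: a count of 0 means the native library was not copied.
#   count_gpl_libs "$HADOOP_HOME/lib/native"
```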

cp /home/user/hadoop-lzo-master/target/hadoop-lzo-0.4.21-SNAPSHOT.jar $HADOOP_HOME/share/hadoop/common/

4) Configure Hadoop

4.1) Distribute the LZO package to servers 66 and 88

scp -r /home/user/hadoop-3.2.2/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar user@192.168.1.88:/home/user/hadoop-3.2.2/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar

scp -r /home/user/hadoop-3.2.2/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar user@192.168.1.66:/home/user/hadoop-3.2.2/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar

scp -r $HADOOP_HOME/lib/native/* user@192.168.1.88:$HADOOP_HOME/lib/native/

scp -r $HADOOP_HOME/lib/native/* user@192.168.1.66:$HADOOP_HOME/lib/native/

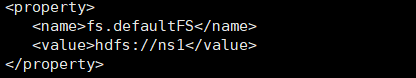
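
The four scp commands above can also be written as a loop over the target hosts. The sketch below assumes that HADOOP_HOME is the same path on every node and that the account is named user, as in the commands above. The helpers run and distribute_lzo and the DRY_RUN switch are hypothetical, added only so the commands can be previewed before anything is copied.

```shell
# Hypothetical helper: print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Hypothetical helper: copy the hadoop-lzo jar and the native libraries to each host.
# Assumes the same HADOOP_HOME path and the "user" account on every node.
distribute_lzo() {
    jar="$HADOOP_HOME/share/hadoop/common/hadoop-lzo-0.4.21-SNAPSHOT.jar"
    for host in "$@"; do
        run scp "$jar" "user@$host:$HADOOP_HOME/share/hadoop/common/"
        run scp -r "$HADOOP_HOME"/lib/native/* "user@$host:$HADOOP_HOME/lib/native/"
    done
}

# Usage: preview first, then run for real.
#   DRY_RUN=1 distribute_lzo 192.168.1.66 192.168.1.88
#   distribute_lzo 192.168.1.66 192.168.1.88
```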

4.2) On servers 66, 88, and 99, edit the configuration file core-site.xml and add the following.

<property>

<name>io.compression.codecs</name>

<value>

org.apache.hadoop.io.compress.GzipCodec,

org.apache.hadoop.io.compress.DefaultCodec,

org.apache.hadoop.io.compress.BZip2Codec,

org.apache.hadoop.io.compress.SnappyCodec,

com.hadoop.compression.lzo.LzoCodec,

com.hadoop.compression.lzo.LzopCodec

</value>

</property>

<property>

<name>io.compression.codec.lzo.class</name>

<value>com.hadoop.compression.lzo.LzoCodec</value>

</property>

4.3) Restart the HDFS cluster
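
After the restart, one way to confirm that each node picked up the new codec list is to read the value back with hdfs getconf and look for the two LZO classes. The sketch below assumes the standard sbin/start-dfs.sh script; has_lzo_codecs is a hypothetical helper. It only checks the configuration value, not whether the native library actually loads.

```shell
# Hypothetical helper: succeed only if both LZO codec classes appear in a
# comma-separated codec list (spaces, tabs, and newlines around entries are ignored).
has_lzo_codecs() {
    list=$(printf '%s' "$1" | tr -d ' \t\n')
    case ",$list," in
        *,com.hadoop.compression.lzo.LzoCodec,*) ;;
        *) return 1 ;;
    esac
    case ",$list," in
        *,com.hadoop.compression.lzo.LzopCodec,*) ;;
        *) return 1 ;;
    esac
}

# Usage on each node after restarting:
#   "$HADOOP_HOME/sbin/start-dfs.sh"
#   has_lzo_codecs "$(hdfs getconf -confKey io.compression.codecs)" && echo "LZO codecs configured"
```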